Training to win in the urban space is one of the greatest challenges for any military. Dismounted small units are the key force on the urban battlefield, and as their many small actions go, so goes the fight.[1] For individual training, the basics of close combat are achievable; simulating the overwhelming intensity and visceral nature of Dismounted Close Combat (DCC), however, is more difficult. Collective training, with all the assets needed to win in this environment, is both extremely complex and hugely expensive. Building a mock city with the realistic physical features that soldiers will encounter in actual combat, let alone the population and infrastructure, exceeds the resources of even the best-funded military. For over fifty years, military analysts, the defence industry and technology experts have argued that, because of the cost of urban warfare training sites, the military must find alternatives using simulation.[2] While pragmatic, this approach has followed the path of least resistance, not the path most likely to increase combat capability. It has focussed on what is easy and cheap rather than what is best: using simple Virtual Reality (VR) environments to replicate DCC skills that are already trained in a physical setting, rather than seeking to augment what is practically challenging, namely complex combined arms (CA) training.

This article will show why improving urban training is critical, identify why this misstep has occurred, and propose the steps needed to correct the direction of travel and, as a result, improve both individual and collective skills.

What is the urgency?

With the number of urban areas doubling in the last 50 years, and with 77% of the global population now living in these areas, urban warfare is almost certainly going to be the vital ground of future conflict.[3] David Kilcullen argues that the four megatrends that will shape future conflict are population growth, urbanisation, littoralisation and connectedness.[4] Whichever reference you pick, the urban environment is universally agreed to play a big part.[5]

At the same time, all the signs and available documents suggest that NATO’s future adversaries are likely to encompass a spectrum of state and non-state actors with access to a range of increasingly available militarised technology. As such, NATO’s historical direct comparative advantage is already eroded, compounded by the fact that some NATO member states are reducing the mass available to their Armed Forces.[6]

The obvious imperative, in this era of increased urbanisation and competitive equality, is to find where indirect comparative advantages exist and ruthlessly exploit them.

An analogy from the card game Gwent is useful here. The game, from the computer game Witcher III, sees players trade moves in an attempt to eradicate the enemy’s combat power in a direct, like-for-like battle, much like the peer-to-peer operating environments of the future. Of note, however, is the way weather, morale, espionage and leadership bonus cards can disproportionately affect the outcome of a round; it is this type of force multiplier that Defence must find and exploit.

This exploitation, as predicted by General Krulak in his three block war theory,[7] will require better-connected, more broadly trained junior commanders, capable of conducting a room entry one minute and coordinating a complex CA strike the next. These junior commanders must be both trusted to conduct these actions and furnished with the right training, equipment, infrastructure and doctrine with which to hone, develop and refine these skills in order to create the necessary overmatch.

What is wrong with training?

While hard to define, lethality ratios often favour combined arms. As far back as the 1940s, the lethality ratio of small arms (SA) against combined arms (CA) was 20% vs 60%.[8] More recent examples have seen 40% vs 50%.[10] The problem is not that trainees spend too much time on their rifle, but that CA training is woefully under-resourced because it is difficult to do well.

Image 001 is a not unrealistic view of the number of multi-national assets a team commander could have at their disposal in the not-too-distant future. This means the gap between what a soldier should be able to do and what they are able to do is only going to widen unless current training systems adapt effectively.

The issue, however, is not just the type and frequency of training. It is also about overcoming the historical reasons why force elements are both so geographically split (especially along functional lines, e.g. artillery and infantry being based apart) and stove-piped in their approach to training. For example, a brigade exercise in the UK can lose days of training to travel time alone, and that is before the relationships needed for success have been established.

If you follow the logic of this argument, the key questions for a sound training needs analysis should be, in order:

- How do we deliver the best CA training available?

- How do we train collectively given geographic dislocation?

- How do we best use the finite training time available?

These questions show how the potential lack of direct competitive advantage could be overcome by investing resource in the often-neglected primary Defence Lines of Development (DLoDs)[11] of training and personnel. Refining and focussing doctrine, and then focussing on training, could disproportionately uplift operational performance. Think back to how much better urgent operational requirements (UORs), such as the initial delivery of UAS, could have performed had they been delivered with a comprehensive training package, or even been made available during pre-deployment training. Militaries are missing a big trick here. It is imperative that we invest more intelligently in the non-equipment aspects of capability to increase the speed and quality of CA training.

We do this by analysing the training gaps and then finding the best solutions for the resources available (‘Operational/Doctrine Pull’), not through the current approach of finding high-tech products and reverse-engineering a military need (‘Tech Push’). The training Holy Grail is therefore an answer to the question: “how can we improve and increase the frequency of training that already happens, while simultaneously making it much more complex and collective, when budgets are tight and time is fixed?” It might sound impossible, but it is not.

What has caused the current misstep?

To achieve this Holy Grail of needs-based training, there must be a ruthless focus on the physical (power and infrastructure) architecture first and the synthetic architecture second. To date, in both the UK and the US, the physical appears to have been neglected in favour of the synthetic through programmes such as the Synthetic Training Environment (STE, US) and the Single Synthetic Environment (SSE, UK).

The issue, and the potential reason for the lack of focus, is the lack of a clear, demand-based vision of what the SSE should actually do and why. While it is increasingly understood that military training should blend the physical and synthetic environments to yield better results, this principle has not been seriously applied to urban training strategies to create the optimal combination of:

- Physical, high-fidelity close combat with synthetic, complex combined arms.

- Collective training through synthetic relocation.

- On-garrison training.

This lack of a clearly articulated vision, combined with Defence’s perpetual desire to be “innovative”, has created a vacuum into which non-directional, ‘low’-fidelity solutions have emerged, both “pushed” from industry and “pulled” from Defence. The vacuum has been compounded because, with no formal top-down requirement, users have built their own requirements from self-identified gaps. But do these gaps point to the disease or merely the symptom? If the latter, we are actually aggravating the issue by mis-spending the money meant to overcome it.

A top-down way of thinking, aligned with strategic operational doctrine, would allow Defence to get ahead of the “post hoc ergo propter hoc”[12] problem, in which unneeded capabilities are purchased because, with the requirement written after the initial capability was delivered, they are perceived to have been what was wanted all along.

This brings the article to Virtual Reality in DCC training where, we contend, the symptom (insufficient training resource) has led to a user-defined capability, rather than to identification of the disease (inadequate and insufficient training facilities).

What is the issue with Virtual Reality for DCC?

‘Virtual’ (reality, VR) is one of the components of the live-virtual-constructive trinity of experimentation approaches commonly used by Defence communities to test ideas and underpin capability development. It should first be explained that virtual simulations could use any “portal”, such as virtual reality, augmented reality or mixed reality. A virtual (reality) simulation uses:

“real people in a simulated environment using simulated equipment. Human machine interface can include mock-ups of real equipment.”[13]

By contrast, live simulation uses real soldiers in an exercise environment, and constructive simulation uses computerised combat models. All three approaches have genuine utility for force development; however, every analytical tool has its strengths and limitations, so best analytical practice is to use multiple tools to corroborate findings.

Commercial computer games (in the virtual reality sphere) and simulations are both designed to ‘model’ combat, but in different ways and to meet very different requirements. Games are designed more for sensory immersion (to look good); simulations are designed to represent combat ‘interactions’ in order to underpin decision-making. Cue George Box: “All models are wrong, but some are useful.”[14]


Box’s point is that any model is an imperfect representation of something, designed for a purpose and deficient in different ways. Many models, of course, are sufficiently close to reality to be useful; a wargame used as a critical-thinking tool to explore military courses of action is one example.

However, anyone who has done a bit of soldiering can tell you that it is visceral, chaotic, exhausting and, well, “lung-bustingly, gut-wrenchingly, head-poundingly real”. Arguably, real urban operations (MOUT, OBUA, MUC[15] or whatever you choose to call them) sit at the apex of this DCC ‘reality’. VR does not come close to representing this environment at the moment, but it has started to be used because it is cheap, because access to the training estate is too difficult or too expensive, and because there has been no objective analysis of the options against training need.

VR misses the mark because:

Hardware: While the concept of the VR headset has been around since the late 1960s, it was only in 2012 that the firm Oculus started to modernise it. Given this relative infancy, there are significant shortfalls when it comes to enterprise simulation, which demands higher fidelity than conventional entertainment. The results of some of these shortfalls are outlined below.

Software: For commercial gaming software, there tends not to be open access to the data and algorithms behind the game that govern how entities (e.g. people, vehicles, weapons and targets) interact. So what? It can be difficult to ‘validate’ that a specific commercial game is sufficiently accurate in terms of the physics of combat, and therefore useful for analysing certain aspects of combat, for example shooting someone wearing body armour.

If we cannot access the so-called ‘vulnerability and lethality’ (V&L) data that underlies such models, then how do we know these games and simulators are helping learn the right lessons? The answer is – we use them appropriately within the scope of their design and known limitations, then we look to corroborate the findings with other tools.

What is this V&L data you speak of?

Example: ballistic data relating to how a projectile flies through the air and the degree to which it penetrates the thing it hits. Even in this simple example, a multitude of physical factors govern the outcome of the weapon-target interaction, e.g. velocity, spin rate, ballistic coefficient, projectile mass and environmental conditions. The more complex the weapon (e.g. a missile) and the target (e.g. a tank), the more complicated it gets.
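To make the point concrete, the Python sketch below integrates a deliberately simplified point-mass trajectory. It is an illustration only, not a V&L model: it assumes a constant drag coefficient and still, sea-level air, and it ignores spin, wind and the standard drag functions (such as G1 or G7) that real ballistic solvers use. The `Projectile` and `fly` names and the projectile values in the usage lines are hypothetical round numbers, not data for any real cartridge.

```python
import math
from dataclasses import dataclass


@dataclass
class Projectile:
    mass_kg: float           # projectile mass
    diameter_m: float        # calibre, used for the frontal area
    drag_coefficient: float  # assumed constant; real bullets vary with Mach number


def fly(projectile: Projectile, muzzle_velocity: float, range_m: float,
        air_density: float = 1.225, dt: float = 1e-4, max_time_s: float = 10.0):
    """Integrate a horizontally fired point-mass trajectory with quadratic drag.

    Returns (time_of_flight_s, drop_m, impact_speed_m_s) at range_m.
    Uses semi-implicit Euler steps; spin, wind and drag tables are ignored.
    """
    g = 9.81
    area = math.pi * (projectile.diameter_m / 2) ** 2
    # Drag deceleration magnitude is k * v^2, with k = rho * Cd * A / (2 * m)
    k = air_density * projectile.drag_coefficient * area / (2 * projectile.mass_kg)

    x, y = 0.0, 0.0
    vx, vy = muzzle_velocity, 0.0
    t = 0.0
    while x < range_m:
        if t > max_time_s:
            raise ValueError("projectile did not reach the requested range")
        v = math.hypot(vx, vy)
        # Drag opposes the velocity vector; gravity acts downwards.
        ax = -k * v * vx
        ay = -k * v * vy - g
        vx += ax * dt
        vy += ay * dt
        # Position uses the updated velocity (semi-implicit Euler).
        x += vx * dt
        y += vy * dt
        t += dt
    return t, -y, math.hypot(vx, vy)


# Hypothetical values, loosely in the range of a small-calibre rifle bullet.
bullet = Projectile(mass_kg=0.004, diameter_m=0.0057, drag_coefficient=0.3)
tof, drop, v_impact = fly(bullet, muzzle_velocity=900.0, range_m=300.0)
print(f"time of flight {tof:.3f} s, drop {drop:.2f} m, impact {v_impact:.0f} m/s")
```

Even this toy needs mass, calibre, drag and air density to be right before its answer means anything; a validated V&L dataset has to get all of those, and far more, right for every weapon-target pairing.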

Government-owned simulations usually get this kind of data from empirical trials or historical datasets. Good V&L data is therefore expensive to collect and usually highly classified. While Defence has worked hard to provide this data where possible, there will always be shortfalls in availability. Commercial gaming companies therefore have to use open-source data or judgement-based approximations of what appears realistic.[16] This is a fundamental, and often overlooked, characteristic of VR for combat. You can get away with this approach in games, but less so in combat simulation.

Okay, nice narrative, but what about the detail?

Beyond their gaming utility, virtual simulations are useful for, for example, examining broad courses of action, analysing the effects of tactics, techniques and procedures (TTPs) and providing judgement-based training. They are not designed to test the physical detail of weapon-target interaction, as finite element analysis models are; nor is it possible to determine objectively whether the physical data coded into these games is accurate enough to do so.

Consider the following components of dismounted close combat:

Shooting: An average assault rifle (by itself) can achieve a group of under 1 MOA[17] at 100 yd (roughly 1 in, or about 26 mm). British soldiers (behind an average assault rifle) are required to achieve a 100 mm group (about 3.4 MOA) at 100 m during a static marksmanship test.
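The MOA figures above come from simple trigonometry. A minimal Python sketch of the conversion, for anyone wanting to check the numbers; the function and constant names are illustrative, not taken from any particular library:

```python
import math

MOA_RAD = math.radians(1 / 60)  # one minute of angle, in radians
METRES_PER_YARD = 0.9144


def group_to_moa(group_size_m: float, range_m: float) -> float:
    """Angular size, in MOA, of a group of the given size at the given range (metres)."""
    angle_rad = 2 * math.atan(group_size_m / (2 * range_m))
    return angle_rad / MOA_RAD


def moa_to_group(moa: float, range_m: float) -> float:
    """Linear size, in metres, subtended by `moa` minutes of angle at range_m."""
    return 2 * range_m * math.tan(moa * MOA_RAD / 2)


# 1 MOA at 100 yd: about 0.0266 m (26.6 mm, or roughly 1.05 in)
print(moa_to_group(1.0, 100 * METRES_PER_YARD))
# A 100 mm group at 100 m: about 3.44 MOA
print(group_to_moa(0.100, 100.0))
```

Working in angle rather than millimetres is what lets a rifle's inherent accuracy and a soldier's test standard be compared directly, regardless of the range each is quoted at.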

During live fire tactical training (LFTT), British infantry are roughly 80% less effective at hitting targets than in a traditional static marksmanship test.[18] Moreover, historical research into combat indicates that ‘participation’ rates of infantry in major combat operations may be as low as 15%.[19]

So, unless your virtual reality simulator takes account of all this, a soldier using it could quite possibly build up a false expectation of their ability to hit a target on operations, especially when they are not even shooting a replica of the in-service firearm but using two hand controllers that in no way replicate the real thing.
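One way to picture what “taking account of all this” might mean is shown in the Python sketch below. It is a hypothetical model, not anything drawn from a fielded simulator. It estimates a static hit probability using a circular normal (Rayleigh) aim-error model, then scales a section’s expected hits by the two figures quoted above. The aim error, target size, round count and section size in the usage lines are invented for illustration. Treating LFTT degradation and participation as independent multipliers is itself an assumption.

```python
import math


def static_hit_probability(sigma_moa: float, target_radius_m: float, range_m: float) -> float:
    """P(hit) for a centred circular bivariate normal aim error on a circular target.

    sigma_moa is the per-axis standard deviation of aim error in MOA (hypothetical).
    For this model the miss distance is Rayleigh-distributed, so
    P(r <= R) = 1 - exp(-R^2 / (2 * sigma^2)).
    """
    moa_rad = math.radians(1 / 60)
    sigma_m = range_m * math.tan(sigma_moa * moa_rad)
    return 1 - math.exp(-target_radius_m ** 2 / (2 * sigma_m ** 2))


def tactical_expected_hits(p_static: float, rounds_per_firer: int, section_size: int,
                           lftt_factor: float = 0.2, participation: float = 0.15) -> float:
    """Expected section hits once tactical degradation is applied.

    lftt_factor=0.2 reflects "roughly 80% less effective" under LFTT;
    participation=0.15 is the lower-bound participation rate quoted above.
    Multiplying them together assumes the two effects are independent.
    """
    firers = section_size * participation
    return firers * rounds_per_firer * p_static * lftt_factor


# Hypothetical inputs: 1.5 MOA aim error, 0.25 m target radius at 200 m.
p = static_hit_probability(sigma_moa=1.5, target_radius_m=0.25, range_m=200.0)
naive = 8 * 10 * p                        # every soldier fires 10 rounds, range conditions
tactical = tactical_expected_hits(p, 10, 8)
print(f"static P(hit) {p:.2f}, naive hits {naive:.1f}, degraded hits {tactical:.1f}")
```

A simulator that scored only the static probability would credit the section with dozens of hits where the degraded estimate suggests a handful, which is exactly the false expectation described above.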

Suppression: The suppressive effects of weapons are well known but not well understood. The NATO standard for incapacitation and suppression states:

“Suppression is driven by a combination of psychological (e.g. cognition, emotion, behaviour) and physiological processes contributing to a perception that continuing with the assigned task would lead to harm. The intensity and duration of suppression is partially determined by the proximity, rate, type and weight of fire; while less quantifiable human factors (combat experience, training, leadership, group cohesion) influence the impact of suppressive fire intensity and duration on an individual or group.”

Virtual simulators are simply not able to induce anything more than a very minor suppressive effect on player behaviour, because players’ lives are not in danger and there is little prospect of pain.

So what? Suppression in close quarter battle (CQB) can considerably affect behaviour, and therefore individual and team effectiveness. If virtual simulators cannot simulate this element, they may not be the right tool for learning real CQB lessons. No decent military instructor ever said: “Train easy, fight hard.”

Physicality: The basics of fire and manoeuvre are hard work; doing them at the speed needed to increase survivability on the battlefield is intense and exhausting. The mechanics of moving and stopping are not what make this skill hard; it is lifting the weight of the equipment on every bound and changing a magazine with breathing accelerating and sweat pouring. Given the variance and complexity of human physical performance, it is unlikely that virtual joysticks will ever replace this without bespoke data models for every user.

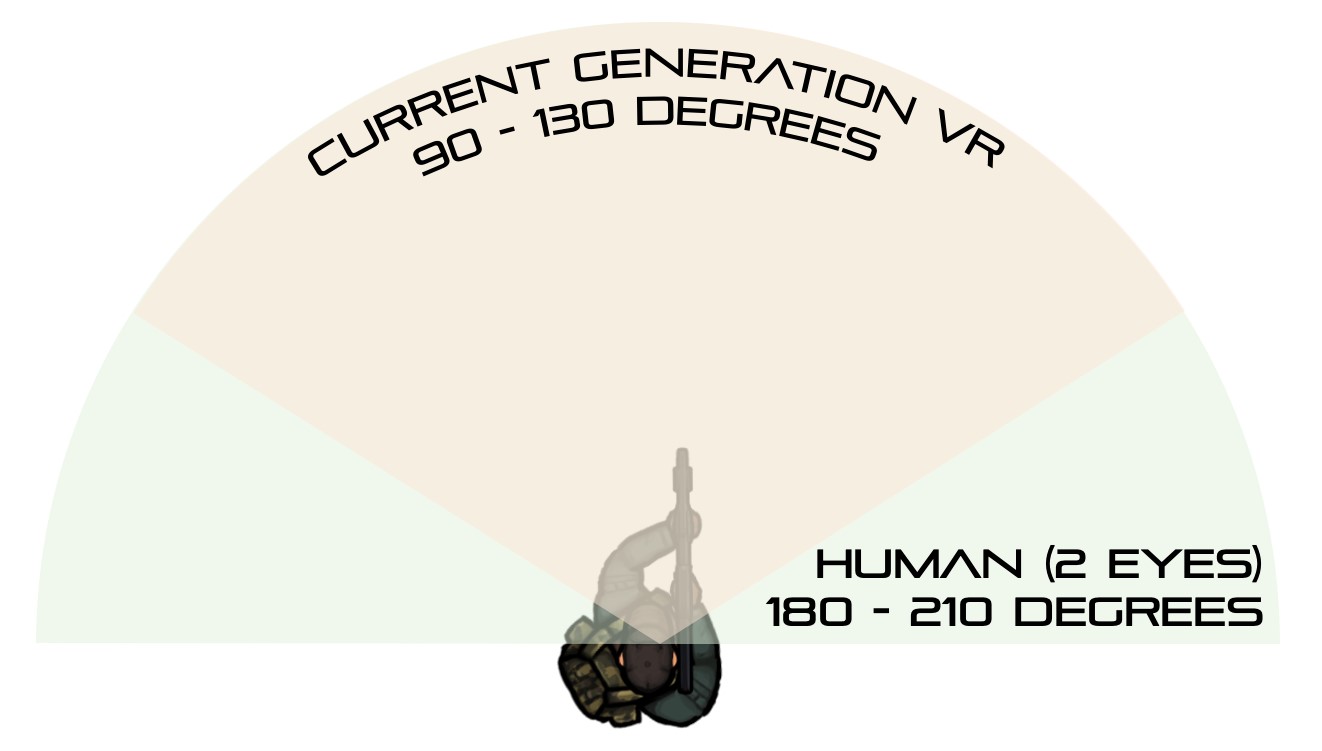

Situational Awareness (SA): Use of a VR head mounted display fundamentally inhibits an individual’s local situational awareness (as per image 002) to some degree – whether that is extent of peripheral vision, body disconnect, hearing interface etc. The trade-off with this reduction in individual awareness is that simulations are able to augment vision with informatics overlays for soldiers to have better SA of the simulated battlespace.20

Image 002: Peripheral vision in VR

Such augmented reality features are better suited to those in longer-term command and control positions away from the tactical space than to the acute close battlespace of dismounted combat: head down for planning, head up for a fight. Given these fundamental truths, replicating reality for dismounted combat as closely as possible is surely the best of all worlds.

So can it work?

Despite much hype and progress over the last 10 years, VR is still not suitable for practising manual tasks, such as weapon drills, where detailed interaction with specific objects is required. User interface technology is not sufficiently close to the real thing, and replicating the acute stressors of a DCC environment is a big ask, even for today’s technology.

VR systems are not useless; they just need to be used appropriately, with their limitations understood. Before any VR capability is procured, its benefits should be assessed to confirm that it is fit for purpose and that its limitations are sufficiently understood. In short: do the benefits of the technology outweigh the additional cost compared with how training is currently conducted? If not, the training should be kept in the physical environment. Without this assessment, militaries will continue to misuse and/or misunderstand the benefits and limitations of VR.

With that in mind, CA simulation is where a near-perfect balance between an urgent capability gap and realistic technological capability could exist. This is because the fidelity requirement is lower (radios and tablets), the cost of output is higher (compare the cost of a Sidewinder missile with that of a 5.56 mm round) and the requirement is critical to ensuring competitive advantage in the contemporary operating environment.

So what is the answer?

Defence must now focus on simulating what is too hard, too dangerous or too costly, and what will offer genuine competitive advantage against adversaries. To do otherwise is to waste money on training that can be done better in the physical environment and that offers only marginal benefit to operational performance.

The strategic intent of the SSE is correct: the future of training must lie in blending the synthetic and the physical to enable affordable, complex CA training scenarios. This mix, however, should ensure that each type of training is done in its optimal environment (virtual or real). This is likely to mean that the SSE starts primarily physical and transitions gradually to virtual as the technology improves. The three core themes of this article, which tie back to the three initial questions, are:

- Operational requirements must lead “innovation”. The current operating environment is becoming more complex than it has ever been, against a political backdrop of cost, size and scrutiny.[21] Given that potential adversaries’ budgets are increasing disproportionately, we must focus on non-traditional force multipliers rather than just evolving what we already do well.[22] This focus on CA, rather than SA, could lead to unforeseen capability benefits by disrupting the vertical silo approach to both UK and US procurement and innovation.[23] Imagine a dismounted soldier using an Air Force UAS to co-ordinate naval gunfire support: a realisation of General Krulak’s vision of a corporal creating strategic effect through the ability to use both operational and strategic assets.

- Technological readiness must overcome human ambition. Virtual training within the DCC environment is highly likely to be one of many long-term tools. However, as we have shown, its benefits do not currently outweigh its limitations, whereas CA simulation’s benefits vastly outweigh its costs. Similarly, distributed network-based training is ready now and can overcome the geographic dislocation of units. Imagine the earlier example of the dislocated brigade conducting synthetic joint exercises from its own garrisons rather than having to co-locate. Militaries must both prepare for this and ensure that achievable cyber standards are created to enable it.

- Individual troops must be at the centre of everything. When soldiers are considered as individuals, with specific skills and qualifications, rather than as a collective unit, one realises how sparse capability is within the DCC environment. There may be only one person in a platoon who can co-ordinate a mortar strike, for example. It is only when individuals are aggregated that all the necessary CA capabilities are enabled. This must change, and we must consider each soldier individually. Anything and everything we can do to improve CA capability must be done. A soldier’s ability to co-ordinate an artillery barrage will improve their overall lethality far more than a 5 mm tighter grouping at 100 m. Achieving this within everyday resource constraints is the challenge; therefore, holistic mixed training infrastructure must be built where the soldiers are located (in garrison, not centralised), and exercises must test individual and collective skills simultaneously to make better use of finite time.

The future is bright for the SSE, and not just in training (imagine being able to test, iterate and refine programmes such as the US Army’s Integrated Visual Augmentation System (IVAS) rapidly through on-garrison facilities). It must, however, be focussed on what is actually needed rather than on what looks “innovative”. VR may well be the long-term future of DCC training, but it is not currently a useful augmentation of how DCC training is conducted; with synthetic CA, by contrast, that future is waiting to be exploited.

If militaries follow the principles laid out in this article, and needs-based small-team urban training is appropriately augmented by the right technology, then the next generation of close combat teams may yet have a disproportionate effect in the highly urbanised operating environment of the future.

Footnotes

1. Dr David Kilcullen, Out of the Mountains.

2. RAND, Combat in Hell: A Consideration of Constrained Urban Warfare, 1996. https://apps.dtic.mil/dtic/tr/fulltext/u2/a319849.pdf

3. https://ec.europa.eu/eurostat/web/degree-of-urbanisation/background

4. Kilcullen, D., Out of the Mountains: The Coming Age of the Urban Guerrilla, 2015.

5. https://www.gov.uk/government/news/the-future-of-our-cities-dstl-publishes-new-report-on-the-future-of-urban-living

6. https://www.gov.uk/government/publications/defence-in-a-competitive-age

7. https://en.wikipedia.org/wiki/Three_Block_War

8. https://www.jstor.org/stable/44227165

9. https://smallwarsjournal.com/documents/urbancasstudy.pdf

10. Whatever the ratio, current infantry training sees a disproportionate focus on SA over CA (at best a 1 to 50 ratio of time spent learning about the rifle to time spent learning about CA during basic training). While it is understood that basic training is not where CA is normally focussed, the ratios of time can be easily measured.

11. In US parlance: the Joint Capabilities Integration and Development System (JCIDS).

12. Latin: after this, therefore because of this.

13. Land Handbook Force Development Analysis and Experimentation Handbook, Virtual Reality, UK MoD, 2014.

14. https://en.wikipedia.org/wiki/All_models_are_wrong

15. Military Operations in Urban Terrain; Operations in Built-Up Areas; Modernised Urban Combat.

16. https://discovery.nationalarchives.gov.uk/details/r/C11064782

17. Minute of Angle.

18. So how well can we shoot?, Stewart and Lowe, Combat Journal, UK MoD, 2016.

19. Brains and Bullets, Leo Murray, 2013.

20. https://bisimulations.com/company/news/press-releases/vbs3-updates-coming-new-military-simulation-capabilities

21. https://www.bbc.co.uk/news/uk-56477900

22. https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/991587/20210521_DE_S_Org_chart_2021.pdf

23. https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/991587/20210521_DE_S_Org_chart_2021.pdf